China’s tech market is one of the world’s leading markets in size and value. Its cloud adoption, however, lags far behind the West’s, which makes it the largest untapped cloud computing market in the world. This steadily narrowing gap creates countless opportunities that western tech companies are chasing. Only two significant factors slow them down: a general distrust of public clouds among Chinese tech leaders, and the operational challenge of dealing with China’s firewall, which is what this post is about.

According to a recent McKinsey report:

“public-usage rates could rise more than 20 percent annually over the next three years… Chinese businesses are at different points in their cloud-migration journey”

It’s quite clear that China is one of the most promising cloud computing markets on the planet. AWS, the undisputed world leader in cloud computing, has already made its way into China with two regions: Beijing and Ningxia.

Still, migrating to a Chinese deployment, whether partially or entirely, is not merely a matter of moving your infrastructure to yet another AWS region under your account:

Customers who wish to use the new Beijing Region are required to sign up for a separate set of account credentials unique to the China (Beijing) Region. Customers with existing AWS credentials will not be able to access resources in the new Region, and vice versa.

-Announcing the AWS China-Beijing Region

Both regions in China are operated by external vendors to “comply with China’s legal and regulatory requirements.”

# TIP

The two regions do not differ much; however, the instance families they offer may vary. Before choosing one, make sure the resources you expect are available. For example, in a recent client’s case, we found that Beijing did not offer any T3 instances at all. Because the requirements left little room to change instance types, we had to switch regions.
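Before committing to a region, this availability check can be scripted. The sketch below is an illustration rather than a tested tool: it assumes an AWS CLI version that includes aws ec2 describe-instance-type-offerings and credentials for the China partition, and the function name instance_type_offered is hypothetical. For reference, cn-north-1 is Beijing and cn-northwest-1 is Ningxia.

```bash
#!/usr/bin/env bash
# instance_type_offered TYPE REGION
# Succeeds if TYPE (e.g. t3.medium) is offered anywhere in REGION.
# Assumes a recent AWS CLI and credentials for the China partition.
instance_type_offered() {
  local type="$1" region="$2" count
  # Ask EC2 how many region-level offerings match the instance type.
  count="$(aws ec2 describe-instance-type-offerings \
    --region "$region" \
    --location-type region \
    --filters "Name=instance-type,Values=${type}" \
    --query 'length(InstanceTypeOfferings)' \
    --output text)" || return 1
  [ "${count:-0}" -gt 0 ]
}
```

Looping over both regions, e.g. for r in cn-north-1 cn-northwest-1, and calling instance_type_offered t3.medium "$r" for each, shows at a glance which region fits your instance requirements.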

Kubernetes

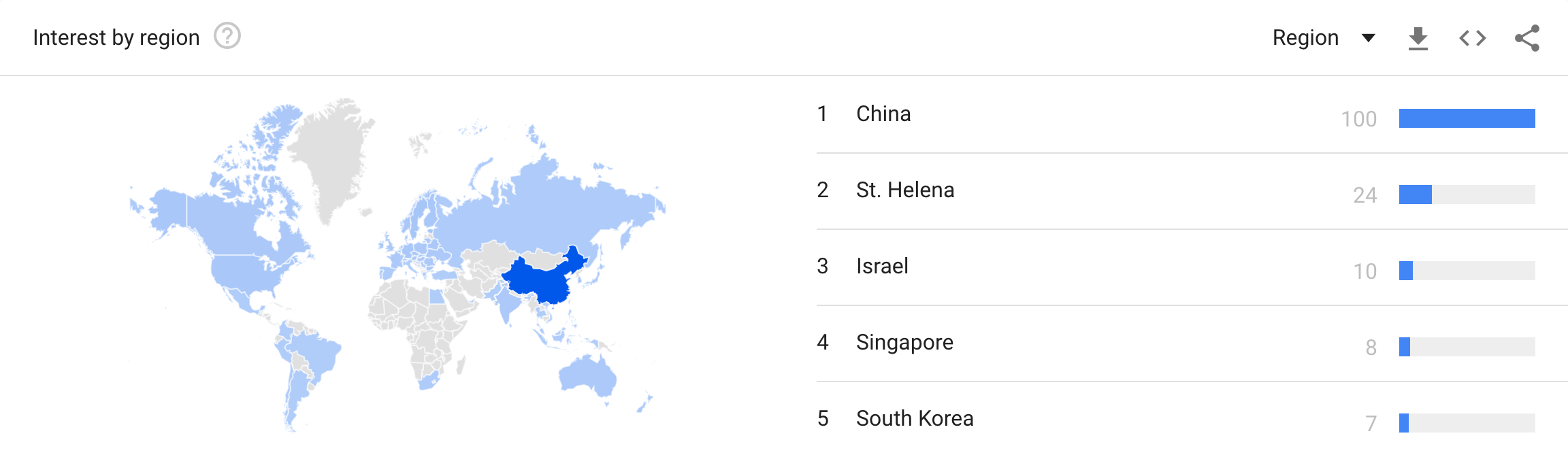

So much has been said and written about Kubernetes (K8S for short); it’s today’s leader in container orchestration. It supports some of the largest and busiest production deployments in the world. Companies sometimes chase it with no better reason than “everybody else does it.” Leaving the why and how aside, Kubernetes is a trend, and it’s a massive **trend** in China.

Given the magnitude of the global trend, and of the Chinese one in particular, it’s no wonder that K8S-based products are looking for their way in. That “way in” needs careful planning and preparation.

Convinced? Let’s dive in.

The great firewall of China

GFW is the combination of legislative actions and technologies enforced by the People’s Republic of China to regulate the Internet domestically. Its role in the Internet censorship in China is to block access to selected foreign websites and to slow down cross-border internet traffic. The effect includes: limiting access to foreign information sources, blocking foreign internet tools (e.g. Google search, Facebook, Twitter, Wikipedia etc.) and mobile apps, and requiring foreign companies to adapt to domestic regulations.

As Wikipedia describes it well, the Great Firewall of China serves its purpose, which usually means bad news for developers trying to extend deployments beyond the wall… ⚔ (*Jon Snow emoji* 😉). Here are some of its drawbacks, their side effects, and solutions:

Speed

Network infrastructure and internet speeds in China are far from excellent, and this affects every kind of communication: from pulling Docker images, through fetching Helm charts, to downloading general SDK libraries, everything is slow.

Even when using mirrors (covered in depth below), speed does not improve, and sometimes it even deteriorates; mirroring only solves access issues.

So how do you mitigate issues created by speed?

- **Request failure handling:** Every request or command, whether a helm install or a go get, should be wrapped in a retry mechanism to handle failed requests. The Chinese firewall responds inconsistently, forcing you to be the adult in this relationship and handle its shenanigans.

- **Resilient microservices:** Pods should have sensible readiness and liveness probes, but failing them shouldn’t mean a failed deployment. Pods that cannot reach a healthy state because their dependencies are unhealthy should be designed to keep trying. If possible, prepare fallback procedures, assuming the surrounding environment is always unstable.

  - This, by the way, is an excellent practice to implement everywhere.

- **Local packaging:** Since communication is relatively slow and inconsistent, a good option is to ship your packages together with the deployment. The concept is borrowed from “Black Site” deployments, where connectivity to the outside world is completely blocked and applications are forced to be fully self-contained. Libraries, binaries, and even code can be packed in various ways and shipped as part of the installation package.

- **Cache proxy servers:** A variation on local packaging is keeping a small caching proxy server at your disposal. For example, a Docker cache pod can act as the gateway for the local Docker daemon, creating a smart layer cache that saves time and network load. The same can be done for npm, pip, or your package manager of choice.
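The request failure handling item above can be as simple as a wrapper function. This is a minimal sketch assuming bash. The name retry, the attempt count, and the delays are illustrative choices, not values from the original setup.

```bash
#!/usr/bin/env bash
# retry MAX_ATTEMPTS INITIAL_DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it exits 0, giving up after MAX_ATTEMPTS tries.
# The delay doubles after every failure (exponential backoff), which
# gives a flaky cross-border link time to recover.
retry() {
  local max="$1" delay="$2"
  shift 2
  local attempt=1
  until "$@"; do
    if (( attempt >= max )); then
      echo "retry: giving up after ${attempt} attempts: $*" >&2
      return 1
    fi
    echo "retry: attempt ${attempt} failed; sleeping ${delay}s" >&2
    sleep "$delay"
    attempt=$(( attempt + 1 ))
    delay=$(( delay * 2 ))
  done
}
```

Typical calls are retry 5 2 helm upgrade --install my-release incubator/some-incubated-chart or retry 5 2 go get ./... (release and chart names hypothetical). Retrying only makes sense for commands that are safe to re-run. That is why helm upgrade --install is used here: a plain helm install --name refuses to reuse a release name that an earlier, half-failed attempt already created.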
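The local packaging idea can be sketched as a pair of shell functions. The first packs a directory of pre-fetched artifacts and writes a checksum next to it. The second verifies that checksum on the target before unpacking. The function names are hypothetical, and the script assumes GNU tar and coreutils (sha256sum).

```bash
#!/usr/bin/env bash
# make_bundle SRC_DIR OUT_TARBALL
# Packs a directory of pre-fetched artifacts (vendored libraries,
# binaries, image tarballs...) into one archive and writes a SHA-256
# checksum file next to it (OUT_TARBALL.sha256).
make_bundle() {
  local src="$1" out="$2"
  tar -czf "$out" -C "$src" . || return 1
  ( cd "$(dirname "$out")" && sha256sum "$(basename "$out")" > "$(basename "$out").sha256" )
}

# unpack_bundle TARBALL DEST_DIR
# Verifies the checksum before extracting, so a truncated or corrupted
# transfer is caught up front instead of halfway through an install.
unpack_bundle() {
  local tarball="$1" dest="$2"
  ( cd "$(dirname "$tarball")" && sha256sum -c --quiet "$(basename "$tarball").sha256" ) || return 1
  mkdir -p "$dest"
  tar -xzf "$tarball" -C "$dest"
}
```

For container images, you can save them outside China with docker save -o images/app.tar my/app:1.0 (a hypothetical image) into the source directory. On the target, docker load -i app.tar restores them without any registry round trip.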

Resolving

Not only does the firewall intentionally slow down traffic; it also returns seemingly random DNS answers that may differ from one query to the next. As a result, no remote service outside China can be trusted to be 100% “available.” The resiliency, failover, and prepare-for-the-worst practices listed above are just as relevant given how unreliable and unpredictable DNS responses in China are.
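To observe this behavior yourself, you can resolve the same host repeatedly and compare the answers. A minimal sketch, assuming a Linux host with getent; the function names are hypothetical. Local caches such as nscd or systemd-resolved can hide the variation. Identical answers only show consistency, not correctness.

```bash
#!/usr/bin/env bash
# resolve_once HOST: prints the sorted, de-duplicated IPv4 answers for
# HOST on a single line (empty if resolution fails).
resolve_once() {
  getent ahostsv4 "$1" | awk '{print $1}' | sort -u | paste -sd' ' -
}

# answers_agree: reads one answer set per line on stdin and succeeds
# only if every line is identical.
answers_agree() {
  [ "$(sort -u | wc -l)" -le 1 ]
}

# check_dns HOST [SAMPLES]: resolves HOST SAMPLES times, one second
# apart, and succeeds only if every answer set was the same.
check_dns() {
  local host="$1" samples="${2:-5}" i
  for (( i = 0; i < samples; i++ )); do
    printf '%s\n' "$(resolve_once "$host")"
    sleep 1
  done | answers_agree
}
```

For example, check_dns github.com 10 || echo "inconsistent DNS answers".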

Mirroring

A word of warning: Chinese mirrors (and ECR) are horrifyingly slow and unresponsive. Keep that in mind when building images (size, layers, and smart caching), as well as when developing automated processes that wait for those images to download. For example, a Kubernetes cluster waiting for its pods to become healthy might wait more than 10 times as long as it would when pulling on a western platform.
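One practical consequence is that every automated wait needs a longer timeout. The sketch below assumes kubectl access to the cluster. The function names are hypothetical, and the default factor of 10 simply mirrors the rough estimate above; tune it from your own measurements.

```bash
#!/usr/bin/env bash
# scaled_timeout BASE_SECONDS [FACTOR]: prints a kubectl-style duration
# (e.g. "600s") scaled up for slow image pulls. FACTOR defaults to 10,
# an illustrative value, not a measured one.
scaled_timeout() {
  local base="$1" factor="${2:-10}"
  echo "$(( base * factor ))s"
}

# wait_for_rollout DEPLOYMENT NAMESPACE BASE_SECONDS
# Waits for a deployment rollout using the scaled timeout.
wait_for_rollout() {
  kubectl rollout status "deployment/$1" --namespace "$2" \
    --timeout="$(scaled_timeout "$3")"
}
```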

Public

Many of the public registries commonly used in the West are blocked by the GFW: Docker Hub, GCR, Helm repositories, Quay, and so on.

Some of them, like Docker Hub, operate their own mirror, while Azure, Alibaba, and friends mirror many of the others. Here are a few:

- Quay.io: quay.azk8s.cn
- GCR: gcr.azk8s.cn
- K8S GCR images: registry.aliyuncs.com/google_containers
- Docker Hub official: https://registry.docker-cn.com
- Docker Hub Azure: dockerhub.azk8s.cn
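When manifests or charts reference images from these registries, you can rewrite the references to point at the mirrors listed above. The mapping below is a sketch. It assumes the mirrors accept the same repository paths as their upstreams, including the library/ prefix for official Docker Hub images, and that they are still reachable; verify each one before relying on it. The function name mirror_image is hypothetical.

```bash
#!/usr/bin/env bash
# mirror_image IMAGE: prints IMAGE rewritten to a China-side mirror.
# Assumption: each mirror exposes the same repository paths as its
# upstream registry. Unknown registries are left unchanged.
mirror_image() {
  local image="$1"
  local first="${image%%/*}"   # registry host or first path segment
  case "$image" in
    k8s.gcr.io/*) echo "registry.aliyuncs.com/google_containers/${image#k8s.gcr.io/}" ;;
    gcr.io/*)     echo "gcr.azk8s.cn/${image#gcr.io/}" ;;
    quay.io/*)    echo "quay.azk8s.cn/${image#quay.io/}" ;;
    docker.io/*)  mirror_image "${image#docker.io/}" ;;
    *)
      if [[ "$image" != */* ]]; then
        # Official Docker Hub image such as nginx:1.17.
        echo "dockerhub.azk8s.cn/library/${image}"
      elif [[ "$first" != *.* && "$first" != *:* && "$first" != localhost ]]; then
        # Docker Hub user/org image such as bitnami/redis.
        echo "dockerhub.azk8s.cn/${image}"
      else
        # Some other registry (ECR, a private registry...): keep as-is.
        echo "$image"
      fi ;;
  esac
}
```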

# TIP

You can add “https://registry.docker-cn.com” to the registry-mirrors array in /etc/docker/daemon.json to pull from the China registry mirror by default.

-docker.com
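The tip above can be scripted. This sketch assumes root access and no existing daemon.json settings, because it overwrites the file; merge by hand if you already have one. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# write_daemon_json PATH MIRROR_URL [MIRROR_URL...]
# Writes a Docker daemon config that contains only a registry-mirrors
# array. Warning: it OVERWRITES PATH and drops any other settings.
write_daemon_json() {
  local path="$1"; shift
  local items="" m
  for m in "$@"; do
    items+="${items:+, }\"${m}\""   # comma-separate after the first entry
  done
  printf '{\n  "registry-mirrors": [%s]\n}\n' "$items" > "$path"
}
```

Run write_daemon_json /etc/docker/daemon.json https://registry.docker-cn.com as root. Then restart the daemon (for example, systemctl restart docker) so the setting takes effect.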

Private

For private images, there is also a range of options. One excellent choice is AWS ECR, which is offered as a service in China for pushing and pulling images. ECR requires authentication that issues temporary tokens valid for 12 hours. This means that when you use it with K8S, you either grant the instances an IAM role that allows them to use the service, or run an authentication job in the cluster. The advantage of such a job is that it lets you extend deployments beyond AWS: external bare-metal resources can use private ECR images regardless of their location.
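Such an authentication job could look something like the sketch below. It assumes the AWS CLI (aws ecr get-login-password exists in v2 and recent v1 releases) and kubectl 1.18 or later for --dry-run=client. The secret name ecr-pull and the function names are hypothetical. China ECR registries live under the amazonaws.com.cn domain.

```bash
#!/usr/bin/env bash
# ecr_registry_host ACCOUNT_ID REGION: China-partition ECR registry host.
ecr_registry_host() {
  echo "${1}.dkr.ecr.${2}.amazonaws.com.cn"
}

# refresh_ecr_secret ACCOUNT_ID REGION [NAMESPACE] [SECRET_NAME]
# Fetches a fresh 12-hour ECR token and creates or updates a
# docker-registry secret that pods can use as an imagePullSecret.
refresh_ecr_secret() {
  local account="$1" region="$2" namespace="${3:-default}" name="${4:-ecr-pull}"
  local host token
  host="$(ecr_registry_host "$account" "$region")"
  token="$(aws ecr get-login-password --region "$region")" || return 1
  # Render the secret client-side, then apply it so re-runs update it
  # in place instead of failing because it already exists.
  kubectl create secret docker-registry "$name" \
    --namespace "$namespace" \
    --docker-server="$host" \
    --docker-username=AWS \
    --docker-password="$token" \
    --dry-run=client -o yaml \
    | kubectl apply --namespace "$namespace" -f -
}
```

If you run it more often than every 12 hours, the secret stays valid. For instance, a Kubernetes CronJob whose service account may manage secrets could call refresh_ecr_secret 123456789012 cn-north-1 (hypothetical account ID). Pods then reference the secret through imagePullSecrets. The token comes from AWS credentials rather than from the node, so nodes outside AWS can pull the same images.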

HELM

Dynamically changing Kubernetes components immediately calls for HELM. If you’re not using HELM for your deployments and cluster management:

- You should 🙂
- You may want to get familiar with it; [here’s a recent post to get you up to speed](https://medium.com/prodopsio/a-6-minute-introduction-to-helm-ab5949bf425)
- You can skip this section of the post

With HELM, things are a bit different: while the *stable* repo is configured by default on initialization, other widely used repos (such as *incubator*) must be added explicitly. Azure kindly mirrors both in China:

```bash
# Stable repo mirror
# https://github.com/helm/charts/tree/master/stable
helm repo add stable http://mirror.azure.cn/kubernetes/charts/

# Incubator repo mirror
# https://github.com/helm/charts/tree/master/incubator
helm repo add incubator http://mirror.azure.cn/kubernetes/charts-incubator/

# Once added, you can:
helm install --name my-release incubator/some-incubated-chart
```

Serving Content

HTTP and K8S ingress handling

Serving HTTP content is not allowed in China by default; it requires a government-issued approval called an ICP (see Alibaba’s blog post). If you don’t want to wait for approval every time, or give up creating new HTTP endpoints at will, the way to go is to write your K8S ingress rules with path-based routing instead of host-based routing. Once a specific host is approved, it can serve any internal application endpoint in the cluster under its own paths.

Before deploying any Chinese hosted website or platform, all organizations must first apply for an Internet Content Provider (ICP) registration.

-Alibaba Cloud
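Path-based routing on a single approved host might look like the sketch below, which prints an Ingress manifest. It assumes the networking.k8s.io/v1 Ingress API (Kubernetes 1.19+; older clusters use extensions/v1beta1 with a different backend layout). The host, the service names, and the Ingress name approved-host are hypothetical. Applications that expect to be served from / may also need a path rewrite, which is specific to your ingress controller.

```bash
#!/usr/bin/env bash
# path_ingress HOST PATH:SERVICE:PORT [PATH:SERVICE:PORT...]
# Prints an Ingress that exposes several services under one approved
# host, routed by path prefix instead of by hostname.
path_ingress() {
  local host="$1"; shift
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: approved-host
spec:
  rules:
  - host: ${host}
    http:
      paths:
EOF
  local pair path svc port
  for pair in "$@"; do
    IFS=: read -r path svc port <<<"$pair"
    cat <<EOF
      - path: ${path}
        pathType: Prefix
        backend:
          service:
            name: ${svc}
            port:
              number: ${port}
EOF
  done
}
```

For example: path_ingress www.example.cn /api:api-svc:80 /app:web-svc:8080 | kubectl apply -f -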

Serving from western S3

While serving content in China is not allowed by default, the global (western) S3 service is not blocked and works fine (to date) from the China AWS regions. Western S3 is useful for storing downloadable files that you want to make publicly accessible, since resources in China can fetch them over HTTP. The alternative is to use s3:// URIs and IAM roles to fetch the files from the Chinese S3 service (which cannot serve them publicly).
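Both options can be sketched as small shell functions. This assumes curl and the AWS CLI. The bucket names and keys are hypothetical. The public URL uses the virtual-hosted style, which works over HTTPS for bucket names without dots.

```bash
#!/usr/bin/env bash
# s3_public_url BUCKET REGION KEY: public URL of an object in a
# global (western) region.
s3_public_url() {
  echo "https://${1}.s3.${2}.amazonaws.com/${3}"
}

# fetch_public BUCKET REGION KEY DEST: plain HTTPS download from
# western S3, with curl's built-in retries for flaky links.
fetch_public() {
  curl -fsSL --retry 5 -o "$4" "$(s3_public_url "$1" "$2" "$3")"
}

# fetch_china_private BUCKET KEY DEST [REGION]: private download from a
# China-region bucket, authorized by the instance's IAM role.
fetch_china_private() {
  aws s3 cp "s3://${1}/${2}" "$3" --region "${4:-cn-north-1}"
}
```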

Alternatives

Extending western deployments to China is not an easy project. When deployments are complex and ever-changing, the number of changes needed multiplies once Chinese environments are part of the picture. China-based customers may consider a few alternatives:

- **VPN:** Many Chinese companies use VPN tunnels for specific workloads, typically with endpoints in Hong Kong and Singapore. If such a tunnel is allowed/approved, many resources become available. However, poor networking configuration is a common pitfall: instead of exposing more, tangled settings keep the environment in the dark. Takeaway: do try to offer or use an existing VPN, but if it messes up communication, **it’s DNS-related 95% of the time**.

- **Going hybrid:** As mentioned above, not everything is blocked by the firewall. With the right mechanisms around a deployment, you can run some of your services outside the firewall on western-deployed resources. Of course, this has to satisfy the regulations and constraints defined by the customer, but it is definitely an option. As long as you account for the DNS resolution issues, failures, and slow speeds described above, you’re good to go.

That’s it.

As the Theory of Constraints (read about it!) teaches us, The Goal is to generate profit, a.k.a. making money. Keep this simple fact in mind when migrating a deployment to China: your R&D division is about to invest a substantial amount of time in adjustments, code refactoring, and tedious waiting for the Chinese network to respond. Time is money, as we all know, but it’s often miscalculated. As complicated as the migration can be, it’s very much possible and highly rewarding, both as a technological challenge and, even more so, as an opportunity to work with one of the most advanced tech markets in the world.

I hope this post helps anyone out there dealing with this unconventional environment avoid the pits I’ve fallen into and the mistakes I’ve made.

Useful, relevant reads

Public cloud in China: Big challenges

An 8-minute introduction to K8S: Core concepts, features and building blocks

A 6-minute introduction to HELM: Simplifying K8S application management

DevOps explained using ToC Logical Thinking Process